Integration Layer - Data Normalization & Consolidation

Integration Layer: Core Idea & Novel Contributions

What is the Integration Layer?

The Integration Layer takes data from the Source Layer in all its messy diversity and transforms it into a standardized, consolidated format that downstream systems can work with.

Core Responsibilities

1. Schema Standardization

Challenge: Each data source uses different field names, units, and structures

- Salesforce calls it “Employees”: 500

- HR system calls it “Headcount”: 5.0E+2 (scientific notation)

- CSV calls it “Staff_Count”: “approx 500”

Solution: Map all sources to canonical ESGETC schema

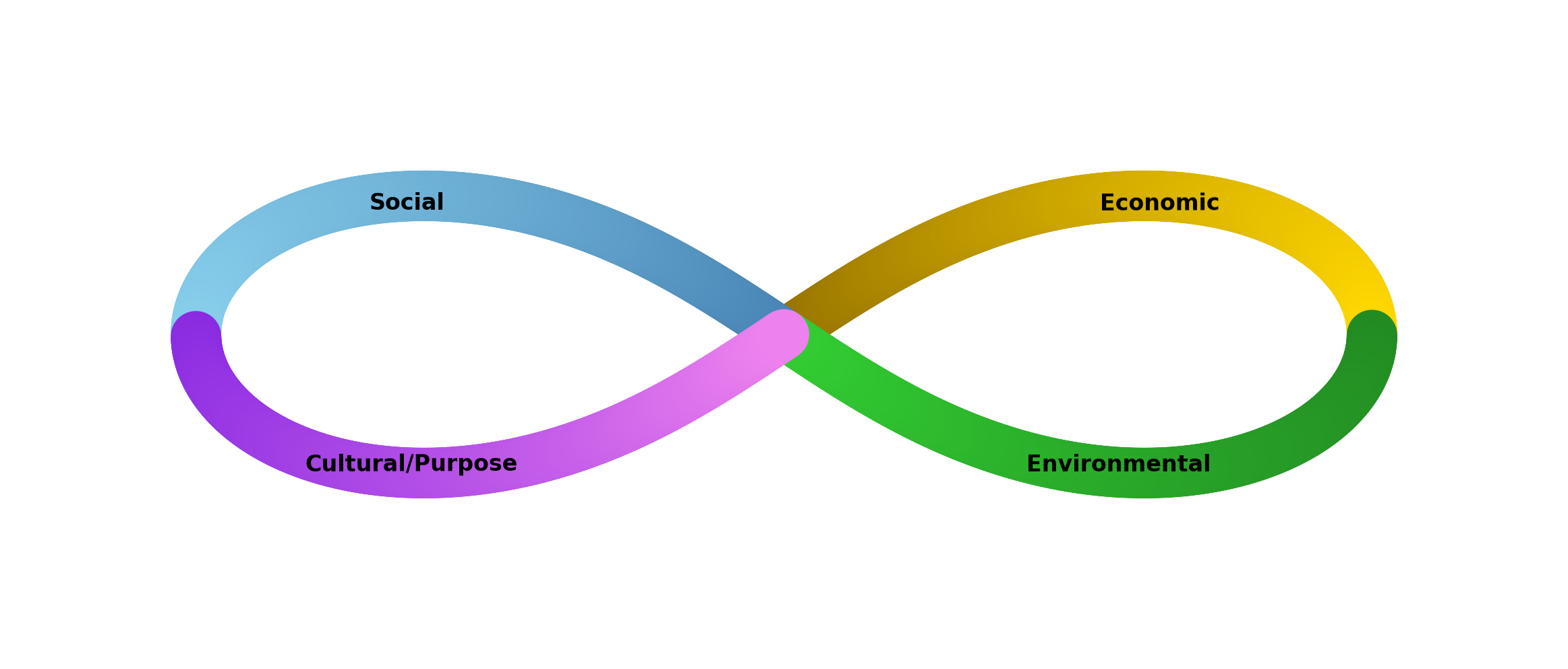

interface StandardizedDataPoint { // Identification organizationId: string; dimension: 'ECONOMIC' | 'SOCIAL' | 'ENVIRONMENTAL' | 'GOVERNANCE' | 'TECHNOLOGICAL' | 'CONNECTEDNESS'; indicator: string; // Canonical indicator name

// Value value: number; valueType: 'ACTUAL' | 'TARGET' | 'TREND' | 'INDEX'; unit: string; // Normalized (employees, tons, percentage, etc)

// Context timeperiod: { start: Date; end: Date; frequency: 'DAILY' | 'WEEKLY' | 'MONTHLY' | 'QUARTERLY' | 'ANNUAL'; };

// Quality & Lineage sourceSystem: string; collectionMethod: 'SURVEY' | 'API' | 'IoT' | 'FILE_UPLOAD' | 'MANUAL'; qualityScore: number; // 0-100% provenance: { originalValue: string; transformationApplied: string; transformedBy: string; transformedAt: Date; };

// Relationships geoLocation?: { country: string; region?: string; city?: string; coordinates?: [number, number]; };

metadata: Record<string, any>;}2. Unit Conversion

Converts all measurements to standard units:

| Category | Conversions Supported |

|---|---|

| Energy | kWh, MWh, BTU, Joules, natural gas units |

| Mass | kg, tonnes, lbs, oz |

| Volume | m³, gallons, liters, acre-feet |

| Distance | km, miles, meters |

| Time | hours, days, months, years |

| Money | USD, EUR, GBP, JPY, etc (with FX rates) |

| Concentration | ppm, ppb, mg/L, ug/m³ |

3. Data Deduplication

Problem: Same organization appears in multiple sources

- “Apple Inc” from Company database

- “Apple” from survey response

- “AAPL” from stock data

- “Apple Inc.” from Wikidata

Solution: Pairwise matching algorithm

- Fuzzy string matching (handles typos, abbreviations)

- Geographic proximity (entities in same location likely same org)

- Relationship inference (if both reference same parent company)

- Manual verification (flagged for human review)

Result: Single unified org record with all data merged.

4. Time Series Alignment

Consolidates data with different collection frequencies:

Source A (quarterly): Q1 --- Q2 --- Q3 --- Q4Source B (monthly): M1 M2 M3 M4 M5 M6 M7 M8 M9 M10 M11 M12Source C (IoT continuous): ████████████████████████████

Unified model: Store all data at finest granularity (daily)Last-value-carry-forward for sparse sourcesInterpolation available for time-series models5. Geographic Harmonization

Aligns geographic references:

- “New York” → State code plus city

- “USA” → Country code

- Coordinates (39.7817, -89.6501) → Administrative hierarchy

- “Springfield, IL” → Standardized to: USA/IL/Springfield

Creates unified geographic schema enabling:

- Regional aggregation

- Distance calculations

- “50-mile radius” queries

- Heat mapping

Novel Contributions

1. Federated Data Model

Unlike traditional data warehouses that force normalization upfront, we maintain multiple simultaneous schemas:

- Canonical Schema: Standardized ESGETC model

- Source Schema: Original data kept as-is

- Transformed Schema: Intermediate transformations

Benefits:

- No data loss through normalization

- Audit trail preserved (how we got from original to canonical)

- Flexibility to maintain source-specific context

- Reversible transformations for data recovery

2. Intelligent Default Assumptions

When data is incomplete, the system makes intelligent assumptions:

Pattern Recognition

- If organization reported $5M revenue for 3 years, trends upward → estimate next month

- If competitor has 20% employee turnover, similar company likely has similar rate

- If region has $X per-capita income, adjust individual org benchmarks

Contextual Inference

- If org is in renewable energy sector, environmental dimension likely more important

- If NGO operates in rural Kenya, social dimension likely most material

- If manufacturer in California, governance dimension affected by state regulations

Conservative Default

- When in doubt, flag for human review

- Use weighted averages (trust good data more than estimated)

- Confidence intervals provide uncertainty bounds

3. Adaptive Consolidation Levels

Customize integration depth per organization type:

Quick Integration (Small NGO, 20 hours effort)

- Basic CSV upload

- Manual data entry for key fields

- No external system connections

Standard Integration (Mid-size company, 40 hours)

- One ERP system connected

- Quarterly data refresh

- Semi-automated

Deep Integration (Large enterprise, 100+ hours)

- Multiple systems (ERP, CRM, HR, IoT)

- Real-time or daily sync

- Advanced transformations

4. Supply Chain Traceability

Goes beyond simple data transformation to track lineage:

- Which original field came from which source system

- What transformation was applied

- When the transformation was applied

- Who approved the transformation

- What is the confidence level in the result

Enables:

- “Why is my economic score 65?” → See exact calculation

- Data quality investigation (trace bad data to source)

- Audit compliance (full provenance trail)

- Reproducibility (exactly replicate transformation)

Technical Architecture

ETL Pipeline

Extract → Transform → Load → Validate ↓ ↓ ↓ ↓Source Normalize UnifiedDB QualityCheckSystems + Dedupe ↓ + Harmonize Analytics DB + Enrich ←── Reference DataReference Data Management

Central repository of standardized values:

├─ Organizations (Wikidata, World Bank)├─ Geographic Hierarchies (country/region/city)├─ Economic Sectors (NAICS, ISIC codes)├─ Sustainability Indicators (GRI, SASB, SDG targets)├─ Unit Conversions (energy, mass, distance)├─ Foreign Exchange Rates (updated daily)└─ Benchmarks (industry averages by size/sector/region)Data Quality Pipeline

Input → Validation → Scoring → Decision ├─ Type ├─ Range ├─ Format ├─ Uniqueness └─ Referential

Score ≥ 80% → Auto-accept to Analytics DBScore 50-80% → Flag for reviewScore < 50% → Reject, request data re-submissionIntegration Patterns

Pattern 1: Real-Time Streaming

For high-frequency data (IoT, financial):

Data Source → Kafka → Stream Processor → Cache → Dashboard(1000x/sec) (buffer) (validate/enrich) (seconds latency)Pattern 2: Batch Processing

For periodic updates (financial reports, HR systems):

Data Source → Scheduled Job → Transform → Database(daily/weekly) (cloud function) (SQL)Pattern 3: API Polling

For external APIs without webhooks:

Schedule → API Call → Rate Limit → Transform → Store(hourly) (Salesforce) (queue) (mapping)Pattern 4: File Uploads

For user-submitted data:

Upload → Virus Scan → Parse → Validate → Queue → Process(CSV) (ClamAV) (format) (schema) (async) (transform)Supported Transformations

Automatic

- Unit conversion (BTU → kWh)

- Date/time harmonization (parse formats)

- case normalization (ABC Corp → ABC Corp)

- Whitespace trimming

Semantic

- Sector classification (code → NAICS category)

- Geographic mapping (“California” → USA/CA)

- Currency conversion (JPY → USD)

- Percentage/basis point conversion

Statistical

- Outlier detection and handling

- Missing value imputation

- Scaling/normalization for comparisons

- Index calculation

Custom

- Organization-specific business logic

- Domain-specific calculations

- Custom mapping tables

- Plugin architecture for advanced transformations

Best Practices

1. Start Simple

Begin with basic CSV, add integrations gradually as needs grow.

2. Document Assumptions

Every transformation should be explicit and documented.

3. Validate Early

Check data quality at each step, not just at the end.

4. Preserve Lineage

Never lose track of how data was transformed.

5. Test Transformations

Before applying to production data, test on samples.

6. Version Control

Keep transformation code in Git, enable rollback if needed.